Get the free Practical Issues of Crawling Large Web Collections - chato


This document discusses anomalies encountered during large web crawls and their implications on web crawler design and information findability. It aims to help web crawler designers and web application


Get, Create, Make and Sign practical issues of crawling

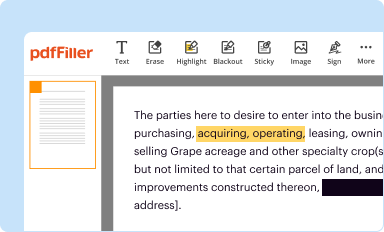

Edit your practical issues of crawling form online

Type text, complete fillable fields, insert images, highlight or blackout data for discretion, add comments, and more.

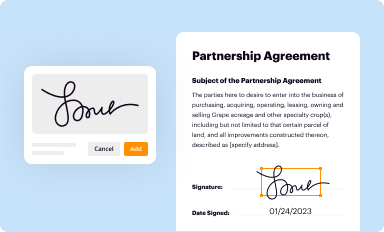

Add your legally-binding signature

Draw or type your signature, upload a signature image, or capture it with your digital camera.

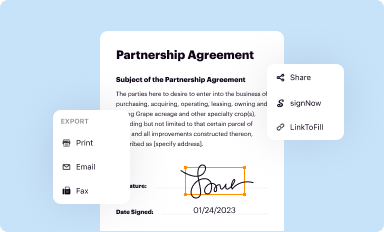

Share your form instantly

Email, fax, or share your practical issues of crawling form via URL. You can also download, print, or export forms to your preferred cloud storage service.

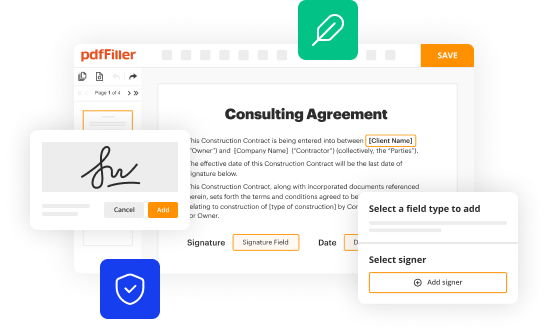

How to edit practical issues of crawling online

Use the instructions below to start using our professional PDF editor:

1

Log in to your account. Click on Start Free Trial and register a profile if you don't have one.

2

Simply add a document. Select Add New from your Dashboard and import a file into the system by uploading it from your device or importing it via the cloud, online, or internal mail. Then click Begin editing.

3

Edit practical issues of crawling. Replace text, adding objects, rearranging pages, and more. Then select the Documents tab to combine, divide, lock or unlock the file.

4

Get your file. When you find your file in the docs list, click on its name and choose how you want to save it. To get the PDF, you can save it, send an email with it, or move it to the cloud.

With pdfFiller, it's always easy to work with documents.

Uncompromising security for your PDF editing and eSignature needs

Your private information is safe with pdfFiller. We employ end-to-end encryption, secure cloud storage, and advanced access control to protect your documents and maintain regulatory compliance.


How to fill out Practical Issues of Crawling Large Web Collections

01. Identify the scope of the web collection you want to crawl.
02. Choose crawling tools and software suited to large collections.
03. Determine the frequency and timing of your crawl to avoid overloading the servers.
04. Set up a robust architecture that can handle data storage and processing needs.
05. Implement relevant protocols and permissions, such as robots.txt, to respect web scraping policies.
06. Design efficient algorithms to filter and prioritize the data you intend to collect.
07. Monitor the crawl process continuously to troubleshoot issues as they arise.
08. Perform data validation and cleaning to ensure the usability of the collected web data.
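Steps 03 and 05 above can be illustrated with a minimal Python sketch that checks robots.txt rules and enforces a per-host delay between requests. It uses the standard-library `urllib.robotparser`; the user agent string `example-crawler`, the `PolitenessGate` class, and the one-second default delay are assumptions made for illustration, not part of any particular crawler.

```python
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-crawler"  # hypothetical crawler name


def build_robots(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class PolitenessGate:
    """Tracks the last request time per host (illustrative helper)."""

    def __init__(self, default_delay: float = 1.0):
        self.default_delay = default_delay  # assumed fallback, in seconds
        self.last_request = {}  # host -> timestamp of last request

    def wait_time(self, host: str, robots: RobotFileParser, now: float) -> float:
        """Seconds to wait before the next request to this host."""
        # Prefer the site's Crawl-delay directive when it declares one.
        delay = robots.crawl_delay(USER_AGENT) or self.default_delay
        last = self.last_request.get(host)
        if last is None:
            return 0.0
        return max(0.0, delay - (now - last))

    def record(self, host: str, now: float) -> None:
        """Remember when this host was last contacted."""
        self.last_request[host] = now
```

A caller would check `robots.can_fetch(USER_AGENT, url)` before each request, sleep for `wait_time(...)`, and then call `record(...)` with the current time (for example from `time.monotonic()`).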

Who needs Practical Issues of Crawling Large Web Collections?

01. Researchers and academics studying web data and its properties.
02. Data scientists looking to build datasets for machine learning models.
03. Businesses analyzing market trends through web data.
04. SEO professionals aiming to gather insights from competitor websites.
05. Developers working on web archiving projects or building search engines.


People Also Ask about

What is the web crawling method?

The first concept of crawling was born in 1993. The World Wide Web Wanderer, developed by Matthew Gray at the Massachusetts Institute of Technology, was the first of its kind: a Perl-based web crawler whose sole purpose was to measure the size of the web.

What is the purpose of web crawling?

A Web crawler starts with a list of URLs to visit. Those first URLs are called the seeds. As the crawler visits these URLs, by communicating with web servers that respond to those URLs, it identifies all the hyperlinks in the retrieved web pages and adds them to the list of URLs to visit, called the crawl frontier.
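The seed-and-frontier loop described above can be sketched in a few lines of Python. This is a simplified illustration, not a production design: the `crawl` function, the `fetch_links` callback (standing in for downloading a page and extracting its hyperlinks), and the `max_pages` limit are all hypothetical names chosen for the example.

```python
from collections import deque
from typing import Callable, Iterable, List


def crawl(
    seeds: Iterable[str],
    fetch_links: Callable[[str], Iterable[str]],
    max_pages: int = 100,
) -> List[str]:
    """Visit URLs breadth-first, starting from the seeds."""
    frontier = deque()  # the crawl frontier: URLs still to visit
    seen = set()        # every URL ever added to the frontier
    for url in seeds:
        if url not in seen:
            seen.add(url)
            frontier.append(url)

    visited = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        # Add newly discovered hyperlinks to the frontier exactly once.
        for link in fetch_links(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return visited
```

Passing the link extractor as a callback keeps the traversal logic separate from networking, so the loop can be exercised against an in-memory link graph before it is pointed at real servers.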

What are the problems with web crawlers?

Crawlers often encounter duplicate pages due to errors or intentional duplication by website owners. This can lead to inaccurate indexing and wasted resources as crawlers struggle to determine which version of a page should be indexed.
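One common way to reduce this waste is to normalize URLs and fingerprint page content, so that identical pages reached through different addresses are recognized. The sketch below assumes exact duplicates only (after collapsing whitespace); near-duplicate detection needs other techniques. The names `normalize_url`, `content_fingerprint`, and `DuplicateFilter` are illustrative.

```python
import hashlib
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Lowercase scheme and host, drop default ports and fragments."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    default_port = {"http": 80, "https": 443}.get(scheme)
    if parts.port in (None, default_port):
        netloc = host
    else:
        netloc = f"{host}:{parts.port}"
    path = parts.path or "/"
    return urlunsplit((scheme, netloc, path, parts.query, ""))


def content_fingerprint(body: bytes) -> str:
    """Hash the page body with runs of whitespace collapsed."""
    collapsed = b" ".join(body.split())
    return hashlib.sha256(collapsed).hexdigest()


class DuplicateFilter:
    """Remembers which URL first produced each distinct page body."""

    def __init__(self):
        self.first_url = {}  # fingerprint -> normalized URL

    def canonical_url(self, url: str, body: bytes) -> str:
        """Return the first URL seen with this content, registering it if new."""
        fingerprint = content_fingerprint(body)
        return self.first_url.setdefault(fingerprint, normalize_url(url))
```

If `canonical_url` returns a URL different from the one just fetched, the page is a duplicate and the crawler can skip indexing it a second time.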

What are the challenges of web crawling?

Web crawlers access sites via the internet and gather information about each page, including titles, images, keywords, and links within the page. This data is used by search engines to build an index of web pages, allowing the engine to return faster and more accurate search results for users.
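As a rough illustration of the per-page gathering step, the following sketch pulls the title and outgoing links from an HTML document using Python's standard `html.parser` module. The `PageSummary` class and `summarize` function are hypothetical names, and real crawlers typically extract far more (images, keywords, metadata).

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class PageSummary(HTMLParser):
    """Collects the <title> text and absolute link targets of a page."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.title = ""
        self.links = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, href))

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def summarize(base_url: str, html: str):
    """Return (title, links) for one HTML page."""
    parser = PageSummary(base_url)
    parser.feed(html)
    parser.close()
    return parser.title.strip(), parser.links
```

The extracted links are what feed the crawl frontier, while the title and other page data are what an indexer would store.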

What are the limitations of web scraping?

The challenges of data scraping include difficult website structures (and changes to them), anti-scraping technologies, IP-based bans, robots.txt issues, honeypot traps, data quality assurance, avoiding copyright infringement, and following data protection laws.

What are the challenges of web application?

Top challenges in web application development include user interface and user experience (a decade ago, the web was a completely different place), scalability (which is not the same as performance, nor simply a matter of making good use of computing power and bandwidth), performance, and knowledge of frameworks and platforms.

What are the risks of web scraping?

Data breaches and privacy violations: scraping bots can unintentionally (or intentionally) collect sensitive information, such as user credentials, email addresses, and financial data. This may result in data breaches and privacy violations, placing both businesses and users at risk.

For pdfFiller’s FAQs


What is Practical Issues of Crawling Large Web Collections?

Practical Issues of Crawling Large Web Collections refers to the challenges and considerations faced when attempting to systematically gather data from extensive web resources, including issues like managing bandwidth, navigating site structures, adhering to legal regulations, and ensuring data accuracy.

Who is required to file Practical Issues of Crawling Large Web Collections?

Individuals or organizations that conduct large-scale web crawling activities are required to file Practical Issues of Crawling Large Web Collections. This often includes researchers, data scientists, and companies involved in data extraction for analytics or indexing.

How to fill out Practical Issues of Crawling Large Web Collections?

To fill out Practical Issues of Crawling Large Web Collections, one needs to provide comprehensive details of their crawling plan, including the target URLs, the scale of the crawling effort, methods of data collection, and compliance measures with web standards and regulations.

What is the purpose of Practical Issues of Crawling Large Web Collections?

The purpose of Practical Issues of Crawling Large Web Collections is to ensure that crawlers operate efficiently and ethically, optimizing data collection methodologies while minimizing disruption to web services and adhering to legal and privacy standards.

What information must be reported on Practical Issues of Crawling Large Web Collections?

Information that must be reported includes the crawler's identification, scope of data to be collected, expected frequency of requests, the target websites, compliance with robots.txt files, and measures taken to protect user data and privacy.
