Is Apache Spark Scalable to Seismic Data Analytics and Computations?

Yutong An, Lei Huang.

Liq I

Department of Computer Science

Prairie View A&M University

Prairie View, TX

Email: Ryan×student.Pam.edu,

We are not affiliated with any brand or entity on this form

Get, Create, Make and Sign is apache spark scalable

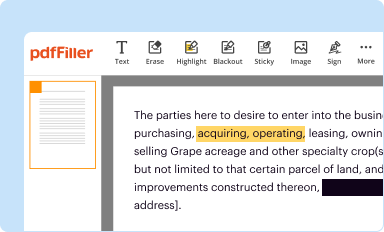

Edit your is apache spark scalable form online

Type text, complete fillable fields, insert images, highlight or blackout data for discretion, add comments, and more.

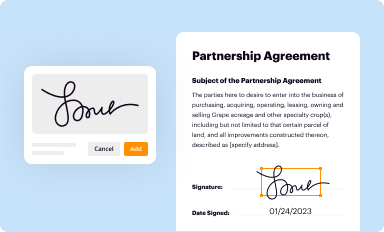

Add your legally-binding signature

Draw or type your signature, upload a signature image, or capture it with your digital camera.

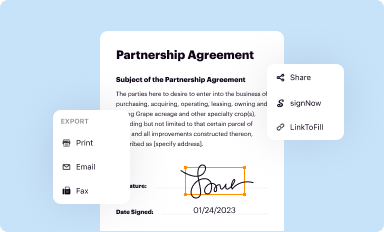

Share your form instantly

Email, fax, or share your is apache spark scalable form via URL. You can also download, print, or export forms to your preferred cloud storage service.

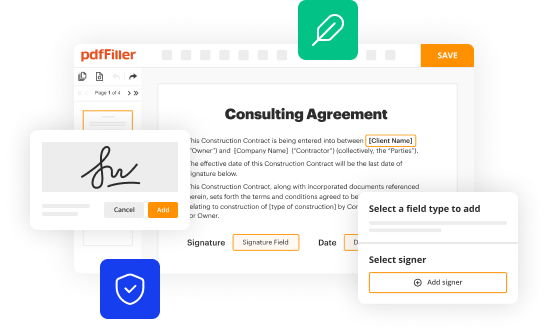

Editing is apache spark scalable online

Here are the steps you need to follow to get started with our professional PDF editor:

1

Log in to your account. Click on Start Free Trial and sign up a profile if you don't have one yet.

2

Prepare a file. Use the Add New button to start a new project. Then, using your device, upload your file to the system by importing it from internal mail, the cloud, or adding its URL.

3

Edit is apache spark scalable. Rearrange and rotate pages, add and edit text, and use additional tools. To save changes and return to your Dashboard, click Done. The Documents tab allows you to merge, divide, lock, or unlock files.

4

Get your file. Select the name of your file in the docs list and choose your preferred exporting method. You can download it as a PDF, save it in another format, send it by email, or transfer it to the cloud.

With pdfFiller, it's always easy to deal with documents.

Uncompromising security for your PDF editing and eSignature needs

Your private information is safe with pdfFiller. We employ end-to-end encryption, secure cloud storage, and advanced access control to protect your documents and maintain regulatory compliance.

How to assess whether Apache Spark is scalable

01

Apache Spark is inherently scalable due to its distributed computing model. It can handle large-scale data processing and analytics tasks by efficiently distributing the workload across a cluster of machines.

02

To determine if Apache Spark is scalable for a specific use case, you need to consider the size of the data you want to process and the computing resources available in your cluster.

03

Start by evaluating the workload size. If you have terabytes or even petabytes of data to process, Apache Spark can effectively handle it by partitioning the data into smaller chunks and processing them in parallel across multiple nodes in the cluster.

04

Assess the available computing resources in your cluster. Spark can run on a wide range of hardware configurations, from a single machine to a cluster of thousands of machines. Adding resources lets an application process more data in parallel, although the achievable speedup is limited by the parts of a job that cannot be parallelized and by the cost of moving data between nodes.

05

To take full advantage of scalability, you need to properly configure your Spark cluster. This includes specifying the number of executor instances, memory allocation per executor, and the number of cores per executor. These settings ensure efficient resource utilization and prevent resource bottlenecks that could hinder scalability.

06

It's important to note that while Apache Spark is scalable, scalability alone might not be the primary concern for every use case. Some applications, such as small-scale data processing or rapid prototyping, may not require the full scalability potential of Apache Spark. In such cases, other factors like ease of use, development speed, and cost-effectiveness may take precedence.
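The configuration step in item 05 can be sketched as a small sizing helper. This is only an illustration under stated assumptions: the cluster dimensions are made up, and the sizing heuristics (reserve one core and 1 GB per node, four cores per executor, roughly 10% of executor memory left for overhead) are common rules of thumb rather than values taken from this page. Only the three property names are actual Spark configuration keys.

```python
def executor_settings(nodes, cores_per_node, mem_gb_per_node,
                      cores_per_executor=4, reserved_cores=1,
                      reserved_mem_gb=1, overhead_fraction=0.10):
    """Derive executor settings from cluster resources.

    All defaults are illustrative assumptions, not recommendations
    from the source text.
    """
    # Leave some cores on each node for the OS and cluster daemons.
    usable_cores = cores_per_node - reserved_cores
    executors_per_node = usable_cores // cores_per_executor
    if executors_per_node < 1:
        raise ValueError("not enough cores per node for one executor")

    # Split the remaining memory evenly, then keep a fraction back
    # for off-heap overhead so the executor fits in its share.
    mem_per_executor = (mem_gb_per_node - reserved_mem_gb) / executors_per_node
    heap_gb = int(mem_per_executor * (1 - overhead_fraction))

    return {
        "spark.executor.instances": str(nodes * executors_per_node),
        "spark.executor.cores": str(cores_per_executor),
        "spark.executor.memory": f"{heap_gb}g",
    }


# Hypothetical cluster: 10 nodes, 16 cores and 64 GB of RAM each.
settings = executor_settings(nodes=10, cores_per_node=16, mem_gb_per_node=64)
for key, value in settings.items():
    print(f"--conf {key}={value}")
```

The printed lines have the `--conf key=value` form accepted by `spark-submit`. Treat the numbers as a starting point to be checked against measurements of the real workload.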

Who needs Apache Spark scalability?

01

Organizations dealing with large volumes of data: Businesses in domains like e-commerce, social media, finance, and healthcare generate massive amounts of data daily. Apache Spark's scalability ensures these organizations can effectively process and analyze their data, enabling them to make data-driven decisions and gain valuable insights.

02

Data scientists and analysts: Professionals working in the field of data science and analytics often need to process large datasets to perform complex computations, build machine learning models, or derive meaningful insights. Apache Spark's scalability allows them to efficiently handle data-intensive tasks, reducing processing time and increasing productivity.

03

Big data platforms and service providers: Companies that provide big data platforms or cloud-based services often leverage Apache Spark's scalability to handle the data processing and analytics needs of their clients. By offering a scalable solution, they can accommodate a wide range of use cases and provide reliable and efficient services.

04

Researchers and academics: In the scientific community, scalability is crucial when dealing with large datasets and complex computational models. Apache Spark's scalability enables researchers and academics to process and analyze extensive scientific data, facilitating advancements in fields such as genomics, physics, and climate research.


Frequently asked questions

Is Apache Spark scalable?

Apache Spark is scalable because it can handle large amounts of data and distribute processing across multiple nodes in a cluster.
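The idea of splitting data into partitions and processing them in parallel can be illustrated on a single machine. The sketch below is an analogy only, not Spark code: a thread pool stands in for the cluster nodes, and the function names and the sum-of-squares workload are hypothetical choices made for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(records, num_partitions):
    """Split a list into num_partitions nearly equal contiguous chunks."""
    size, extra = divmod(len(records), num_partitions)
    partitions, start = [], 0
    for i in range(num_partitions):
        # The first `extra` partitions take one additional record.
        end = start + size + (1 if i < extra else 0)
        partitions.append(records[start:end])
        start = end
    return partitions


def sum_of_squares(partition):
    """Work done independently on one partition."""
    return sum(x * x for x in partition)


def parallel_sum_of_squares(records, num_partitions=4):
    """Process each partition on its own worker, then combine the results."""
    partitions = split_into_partitions(records, num_partitions)
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partial_sums = list(pool.map(sum_of_squares, partitions))
    # Combining partial results is the only step that needs all of them.
    return sum(partial_sums)


data = list(range(1, 1001))
total = parallel_sum_of_squares(data, num_partitions=4)
print(total)
```

Each partition is processed without reference to the others, which is what allows the work to be spread over more workers as the data grows.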

Who needs to consider Apache Spark's scalability?

Organizations or individuals using Apache Spark for large-scale data processing should consider its scalability.

How do you determine whether Apache Spark is scalable?

To determine if Apache Spark is scalable, one must analyze its ability to handle increasing data loads efficiently.
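One common way to quantify this is to run the same job with different numbers of workers and compare runtimes. The sketch below computes speedup and parallel efficiency relative to the smallest configuration. The function name is hypothetical and the runtimes are made-up numbers used only to show the arithmetic, not measurements from any Spark job.

```python
def scaling_report(runtimes_by_workers):
    """Return (workers, speedup, efficiency) tuples relative to the
    smallest worker count in the input.

    runtimes_by_workers maps a worker count to a runtime in seconds.
    """
    baseline_workers = min(runtimes_by_workers)
    baseline_time = runtimes_by_workers[baseline_workers]
    report = []
    for workers in sorted(runtimes_by_workers):
        speedup = baseline_time / runtimes_by_workers[workers]
        # Efficiency of 1.0 means the speedup matches the added workers.
        efficiency = speedup / (workers / baseline_workers)
        report.append((workers, round(speedup, 2), round(efficiency, 2)))
    return report


# Hypothetical runtimes in seconds for 1, 2, 4, and 8 workers.
measured = {1: 1200.0, 2: 630.0, 4: 340.0, 8: 200.0}
report = scaling_report(measured)
for workers, speedup, efficiency in report:
    print(f"{workers} workers: speedup {speedup}x, efficiency {efficiency}")
```

Efficiency that stays close to 1.0 as workers are added suggests the job scales well. A steady decline points to serial work or data movement that is limiting the gains.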

What is the purpose of Apache Spark's scalability?

The purpose of Apache Spark being scalable is to ensure that it can grow with the data processing needs of users.

What should an assessment of Apache Spark's scalability cover?

It should cover how Apache Spark distributes tasks, partitions data, and manages resources.
